#knowledgesharing

We pass on our knowledge: our knowledge-sharing articles bundle our entire cloud know-how into engaging technical articles, so that you too can learn from our expertise.

AWS

Key Takeaways from Securing APIs in AWS

AWS Lambda

Data Masking of AWS Lambda Function Logs

AWS

MFA Requirement for AWS Root Users

OpenShift

An Example of Using GitOps to Deploy a Modern Application Development Platform

HYCU

Simplify Your Data Center Footprint in the Hybrid Cloud Era

HYCU

HYCU Data Protection Offers Resilient Backup Management in the Hybrid Cloud Era

HYCU

Digital Transformation with HYCU and Nutanix

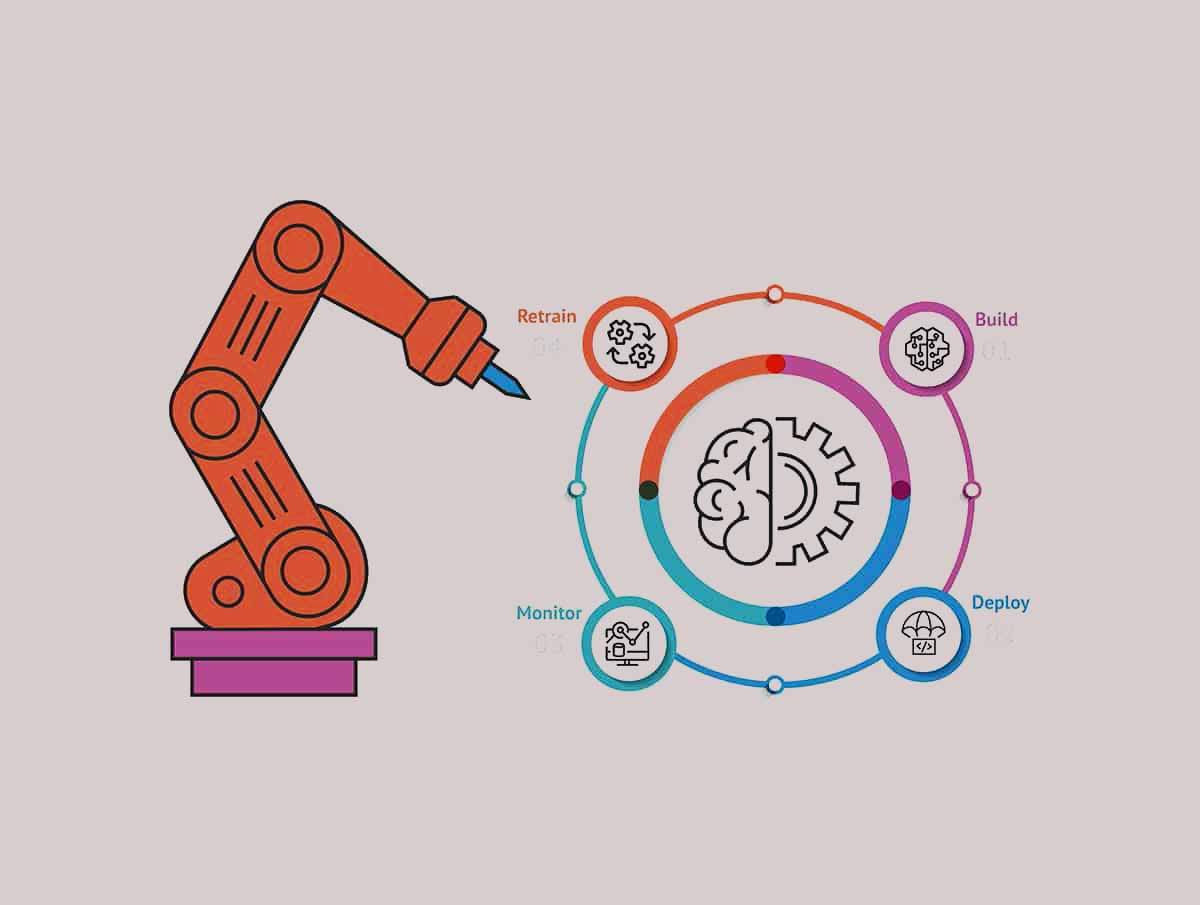

MLOps Pipeline – Part 2

Fully Automated MLOps Pipeline – Part 2

MLOps Pipeline – Part 1

Fully Automated MLOps Pipeline – Part 1

Amazon SageMaker Feature Store

Near Real-Time Data Ingestion into the SageMaker Feature Store

AWS AppConfig

AWS AppConfig for Serverless Applications Demo

Containerization

Build a Cloud-Native Platform for Your Customers

AWS Data Lake

RDBMS Data Ingestion

AWS Lambda

Comparing AWS Lambda on ARM vs x86 architectures

E-Book

Top 20 Private Cloud questions answered

Disaster Recovery Plan

How exactly does data replication work?

Green Cloud Computing

What can each of us do for a greener cloud?

Multi-Cloud vs. CAF

The AWS Cloud Adoption Framework explained.

Cloud Migration

AWS Landing Zone Framework

Cloud Native

How is a cloud-native infrastructure guaranteed?

Case Study – Raiffeisen

Automation in the Translation Process

MACHINE LEARNING IN PRACTICE

Whitepaper: The Natural Opportunities of Artificial Intelligence

CASE STUDY – SBB

The connectivity problem

Training a Model

Machine Learning in Practice

from techie to techie

Cloudcademy Bootcamps

With our bootcamps, certification trainings, and workshops, we offer a wide range of events where you can expand your cloud knowledge. Sign up today for our free Cloudcademy bootcamps!

Webcasts

webcast

Reinforcement Learning 101 - What is Artificial Intelligence?

webcast

AWS Is Coming to Switzerland

webcast

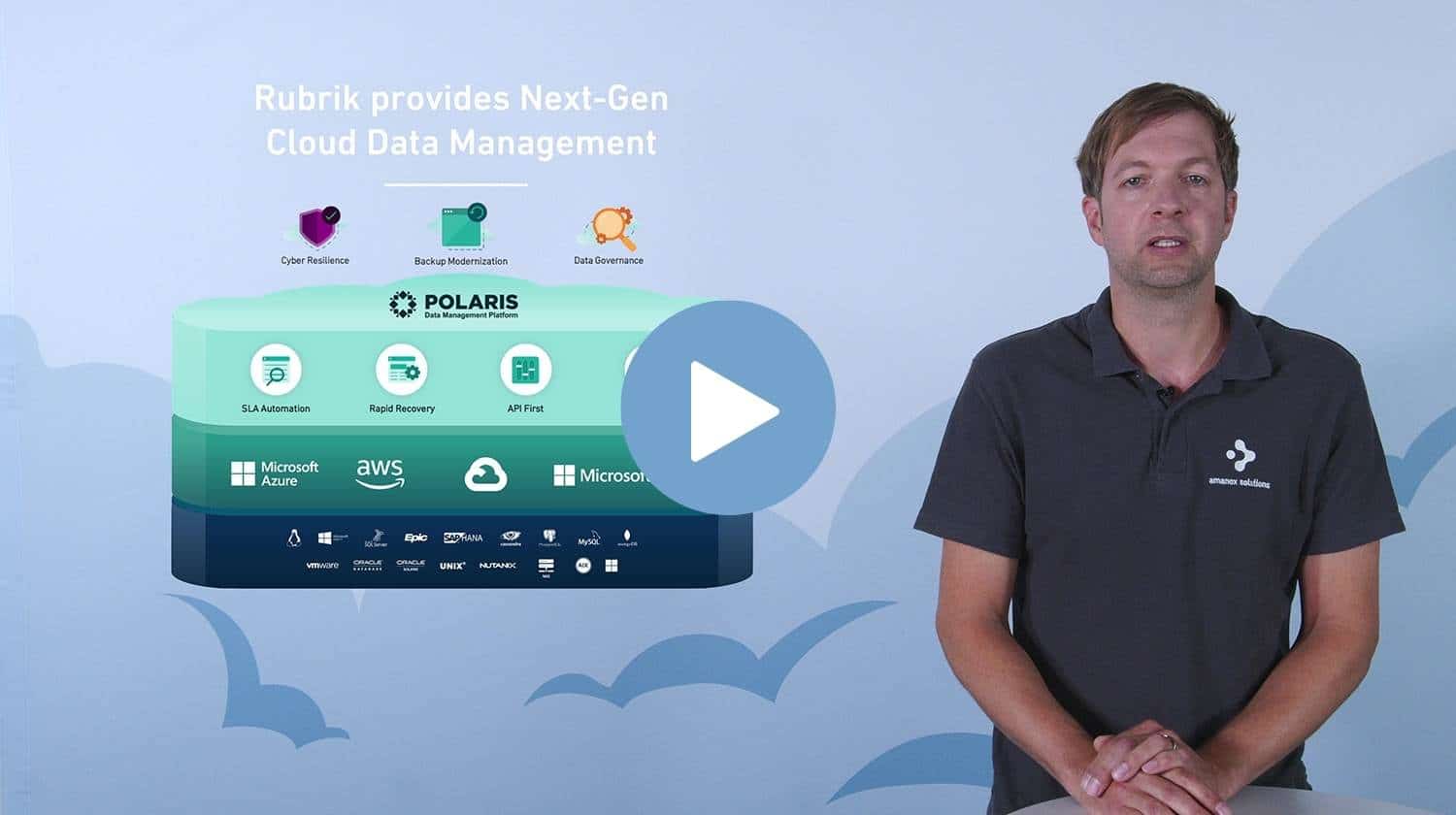

Don't backup, go forward

by Andreas Wenger

Dive into Rubrik's backup solution with Andreas. He walks you through the dashboard, shows how you can modernize and automate your SLA backup policies, and explains how Ransomware Protection guards you against attacks.

webcast

The New Home Office

webcast

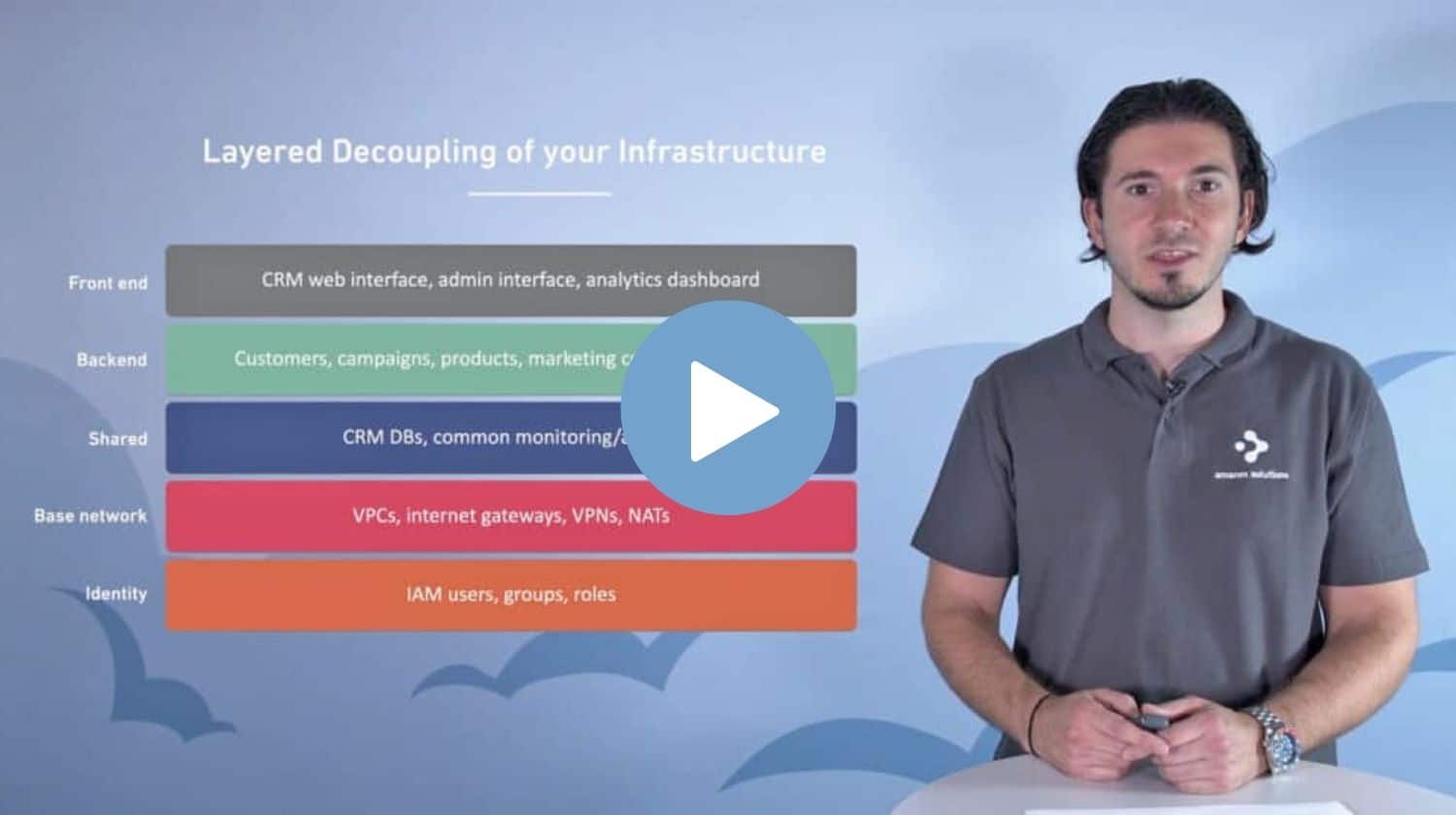

AWS Serverless Landing Zone

by Dino Bektas

Demo Video

Scale Your Server Capacity with the Hybrid Cloud

Not enough server capacity for your Christmas business? Find out in our demo video how you can increase your server capacity for a short time.

Let the sparks fly

Do you have questions?

Our experienced Amanoxians will gladly answer your questions and are on hand to advise you.